Controlling memory with Google's ExoPlayer

Playback requires memory. Learn how to control ExoPlayer so that it uses memory efficiently in your application.

On Android, there are many areas of playback that you can’t control, where you rely purely on the device’s capabilities. However, there is one area where you, as an application developer, are in control and given enough power to shoot yourself in the foot… memory. In this short article, we’ll look at how ExoPlayer manages its main playback buffer, and what you can do to influence its behaviour and requirements.

Let’s start by considering why we need additional memory for playback. If we zoom out and look at playback as a whole, we know the following:

- The decoder has a number of input buffers that we’ll be asked to fill with encoded data

- The decoder has a number of output buffers that are filled with decoded data before it is rendered

- The input and output buffers are re-used, keeping overhead to a minimum

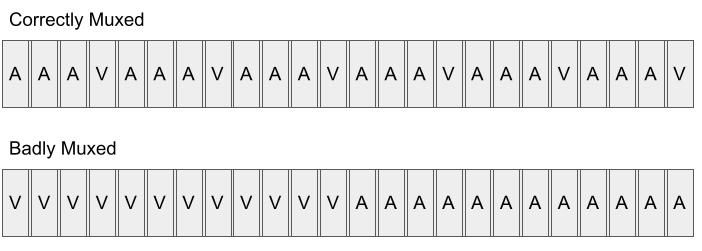

If an application only operated on the decoder's own input buffers, what issues would arise? We’ll start by considering where the data is coming from. A media file, for example an MP4, will contain a number of tracks which represent all the audio, video and text streams that are present. Each stream will have a number of samples that represent the encoded data for that specific track. Each sample has a duration associated with it, and all samples across all tracks are interleaved within the original file. Sometimes the tracks are efficiently interleaved, sometimes they are not. Here’s an example to illustrate the difference:

If, as a consumer of the file, we need to quickly read the next sample for a given track to feed it into the decoder, there is no guarantee that it will be the next sample we encounter while reading sequentially through the file. Reaching it could require a lot of seeking, which is time-consuming. It would be hard, or even impossible, to read data from the source media file and populate the decoder’s buffers fast enough to allow playback without stalling. This is why an additional buffer is required to keep the decoders fully and efficiently utilised.
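To make that cost concrete, here is a minimal sketch that counts how many seeks a reader would need if it fetched samples straight from the file in the order the decoders ask for them (alternating between tracks), with no intermediate buffer. The `InterleavingDemo` class and the two file layouts are hypothetical illustrations, not anything taken from ExoPlayer.

```java
// Hypothetical model: a file is a string of track ids, one character per sample,
// in the order the samples are stored ('V' = video sample, 'A' = audio sample).
final class InterleavingDemo {

    // Counts the non-sequential jumps needed when samples are consumed round-robin
    // by track, directly from the file. Assumes every sample belongs to one of `tracks`.
    static int countSeeks(String fileOrder, String tracks) {
        boolean[] consumed = new boolean[fileOrder.length()];
        int seeks = 0;
        int readHead = 0; // index the reader would reach next without seeking
        int remaining = fileOrder.length();
        for (int turn = 0; remaining > 0; turn++) {
            char wanted = tracks.charAt(turn % tracks.length());
            int index = -1;
            for (int i = 0; i < fileOrder.length(); i++) {
                if (!consumed[i] && fileOrder.charAt(i) == wanted) {
                    index = i;
                    break;
                }
            }
            if (index < 0) {
                continue; // this track has no samples left
            }
            if (index != readHead) {
                seeks++; // the wanted sample is not where the reader currently is
            }
            consumed[index] = true;
            readHead = index + 1;
            remaining--;
        }
        return seeks;
    }

    public static void main(String[] args) {
        System.out.println(countSeeks("VAVAVAVA", "VA")); // prints 0
        System.out.println(countSeeks("VVVVAAAA", "VA")); // prints 7
    }
}
```

With the well interleaved layout the reader never leaves its sequential path, while the poorly interleaved one forces a seek for almost every sample.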

ExoPlayer’s LoadControl

The key component of ExoPlayer that manages this shared memory buffer is the LoadControl. Before we look into the interface and the methods that decide how the memory is used, let’s start with where this memory is stored. ExoPlayer makes use of what it calls an Allocator, which is returned via LoadControl.getAllocator. One thing that you’ll notice is that it can only be used to acquire memory allocations of a fixed size, something which the consumer can’t control. The fixed size is reported via Allocator.getIndividualAllocationLength, but if you’re using ExoPlayer’s default implementation, this will normally be 64 KB (as seen here).

This leads to two questions: what if we need more than a single allocation to hold a single sample, and why can’t we control the size of the allocations? Without going in too deep, we need to remember that memory is finite. We are also restricted in the contiguous memory allocations we can make. If we keep requesting large blocks of contiguous memory, the device will quickly become unable to meet our requests, even though memory is still available.

ExoPlayer internally creates a linked list of these smaller memory allocations and manages access across them. When requested to fill a sample buffer that spans more than one allocation, it will read across the allocations, rebuilding the single buffer. If you want to understand more about how this is implemented, feel free to check out the implementation of the SampleDataQueue, specifically the AllocationNode class. At a high level, we can simply consider that each track contained within the file (video, audio, text, metadata, etc.) will have its own SampleDataQueue, each internally storing the packets which have been extracted but not yet read by the renderer.
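The idea of spreading one sample across several fixed-size allocations can be sketched in a few lines. This is a simplified, hypothetical model (the `ChunkedQueue` class is my own name, and the demo uses a 4-byte allocation length so the behaviour is easy to follow); it is not ExoPlayer’s SampleDataQueue.

```java
import java.util.ArrayDeque;
import java.util.Arrays;

// Hypothetical queue backed by fixed-size allocations, loosely modelled on the
// description above. Callers must only read bytes that have already been written.
final class ChunkedQueue {
    private final int allocationLength;
    private final ArrayDeque<byte[]> allocations = new ArrayDeque<>();
    private int writeOffset; // write position within the last allocation
    private int readOffset;  // read position within the first allocation

    ChunkedQueue(int allocationLength) {
        this.allocationLength = allocationLength;
        this.writeOffset = allocationLength; // forces an allocation on the first write
    }

    // Appends a sample, acquiring new fixed-size allocations as needed.
    void write(byte[] sample) {
        int written = 0;
        while (written < sample.length) {
            if (writeOffset == allocationLength) {
                allocations.addLast(new byte[allocationLength]);
                writeOffset = 0;
            }
            int n = Math.min(sample.length - written, allocationLength - writeOffset);
            System.arraycopy(sample, written, allocations.peekLast(), writeOffset, n);
            written += n;
            writeOffset += n;
        }
    }

    // Rebuilds a single contiguous buffer by reading across allocations,
    // releasing each allocation once it has been fully consumed.
    byte[] read(int length) {
        byte[] out = new byte[length];
        int read = 0;
        while (read < length) {
            int n = Math.min(length - read, allocationLength - readOffset);
            System.arraycopy(allocations.peekFirst(), readOffset, out, read, n);
            read += n;
            readOffset += n;
            if (readOffset == allocationLength) {
                allocations.removeFirst();
                readOffset = 0;
            }
        }
        return out;
    }

    int allocationCount() {
        return allocations.size();
    }

    public static void main(String[] args) {
        ChunkedQueue queue = new ChunkedQueue(4);
        byte[] sample = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9};
        queue.write(sample);
        System.out.println(queue.allocationCount());                  // prints 3
        System.out.println(Arrays.equals(queue.read(10), sample));    // prints true
    }
}
```

A 10-byte sample ends up spread over three 4-byte allocations, yet the reader still gets it back as one contiguous buffer.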

Let’s now look at some of the methods of the LoadControl interface, starting with those that allow the instance to make sensible choices internally based upon the state of the player as well as the tracks that have been selected:

- onPrepared(), onStopped(), onReleased(): These should be fairly self-explanatory. They tell the LoadControl instance what state the player is in, allowing it to trim or release any held resources.

- onTracksSelected(...): If we know which tracks are selected, both their type and their number, then we can estimate how many bytes each track can reasonably claim from our global playback buffer. By looking at all enabled tracks, we can compute a reasonable target size for our Allocator.
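As a rough sketch of that calculation, the target can be computed by summing a per-type budget over the selected tracks. The `TargetBufferSketch` class, the `TrackType` enum and the budget figures below are illustrative assumptions of mine, not values taken from ExoPlayer.

```java
import java.util.List;

// Hypothetical sketch: derive a target buffer size from the selected track types.
final class TargetBufferSketch {
    enum TrackType { VIDEO, AUDIO, TEXT, METADATA }

    // Size of one fixed allocation, matching the 64 KB figure mentioned earlier.
    static final int ALLOCATION_BYTES = 64 * 1024;

    // Illustrative budgets, expressed as a number of allocations per track type.
    static int budgetFor(TrackType type) {
        switch (type) {
            case VIDEO:
                return 2000 * ALLOCATION_BYTES;
            case AUDIO:
                return 200 * ALLOCATION_BYTES;
            default:
                return 2 * ALLOCATION_BYTES; // text, metadata and similar small tracks
        }
    }

    // Sums the budgets of every selected track to get the overall target.
    static int targetBufferBytes(List<TrackType> selectedTracks) {
        int total = 0;
        for (TrackType type : selectedTracks) {
            total += budgetFor(type);
        }
        return total;
    }

    public static void main(String[] args) {
        List<TrackType> selected = List.of(TrackType.VIDEO, TrackType.AUDIO, TrackType.TEXT);
        System.out.println(targetBufferBytes(selected)); // prints 144310272
    }
}
```

An audio-only stream would get a much smaller target than a video stream, which is exactly the kind of decision knowing the selected tracks makes possible.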

Next, let us consider how the LoadControl influences the player’s decisions about whether playback should start (or resume after a buffering event) and whether loading should continue in the background. The following two methods are key:

- shouldStartPlayback(...): This method is called repeatedly as the player loads data, as well as when it’s temporarily paused after a buffering event. The passed in parameters allow the LoadControl to know the durations the player believes have been buffered, ready for immediate playback. There are two main scenarios when considering how to implement this method:

  - VOD: For on demand video, we assume that we can buffer much faster than real time. This means we can set sensible targets for our buffers, and the time taken to reach them will depend purely on our network speed. Generally, we will set an aggressively low buffer target to start playback, but if we detect a buffering event, we will increase that target to try and ensure it doesn’t happen again.

  - Live: For Live video, depending on our playback position within the Live window, it’s possible that buffering 5 seconds of content could take as long as 5 seconds. Since we want to start playback as soon as possible, we may have to set lower targets, accepting that we have a much smaller buffer in front of the playback position.

- shouldContinueLoading(...): This method simply tells ExoPlayer whether or not it should continue to feed data into the Extractor, outputting samples to the SampleDataQueues for each track. In its simplest form, we can decide this by comparing how much our Allocator has actually allocated against our target, computed when we knew which tracks were selected.
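The two decisions above can be sketched as a small policy class. This is a deliberately simplified, hypothetical model: `SimpleLoadPolicy` does not implement ExoPlayer’s LoadControl interface (whose exact method signatures vary between versions), and the thresholds used in the demo are illustrative assumptions.

```java
// Hypothetical sketch of the start-playback and continue-loading decisions.
final class SimpleLoadPolicy {
    private final long startThresholdUs;    // buffer needed for the initial start
    private final long rebufferThresholdUs; // larger buffer needed after a stall
    private final int targetBufferBytes;    // target computed from the selected tracks

    SimpleLoadPolicy(long startThresholdUs, long rebufferThresholdUs, int targetBufferBytes) {
        this.startThresholdUs = startThresholdUs;
        this.rebufferThresholdUs = rebufferThresholdUs;
        this.targetBufferBytes = targetBufferBytes;
    }

    // Start quickly the first time, but demand more buffer after a buffering event.
    boolean shouldStartPlayback(long bufferedDurationUs, boolean rebuffering) {
        long requiredUs = rebuffering ? rebufferThresholdUs : startThresholdUs;
        return bufferedDurationUs >= requiredUs;
    }

    // Keep loading only while the allocator is below its target size.
    boolean shouldContinueLoading(int totalBytesAllocated) {
        return totalBytesAllocated < targetBufferBytes;
    }

    public static void main(String[] args) {
        // Assumed values: 2.5 s to start, 5 s after a rebuffer, 1000-byte target.
        SimpleLoadPolicy policy = new SimpleLoadPolicy(2_500_000L, 5_000_000L, 1000);
        System.out.println(policy.shouldStartPlayback(3_000_000L, false)); // prints true
        System.out.println(policy.shouldStartPlayback(3_000_000L, true));  // prints false
        System.out.println(policy.shouldContinueLoading(1000));            // prints false
    }
}
```

A Live-oriented policy would follow the same shape with lower thresholds, trading a smaller safety margin for a faster start.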

Now that we understand these methods, we should have a reasonable idea of how the logic of the LoadControl influences playback. One thing we haven’t considered, though, is what happens to the packets (or samples) for each track after they have been passed to the renderer. Do we simply throw them away?

Considering a few playback scenarios will help provide a bit more context. If your application allows the user to easily seek back by some preset value in order to rewatch something that they might have missed (e.g. a goal being scored), then obviously the best experience is for this to be near immediate. If you’ve thrown away the data covering that time, then the player is required to fully stop playback, ask the data source to seek and re-extract those packets, and start playback only after enough data has been buffered. However, if you were able to keep that time within your active buffer window, this operation becomes a lot less destructive. We can simply move the playback position back in time while the data source and extractor remain untouched, continuing to load data from their current position further ahead.

Another scenario could be when the user decides to switch tracks, e.g. to a new audio track. This may happen in between key frames, so to ensure a smooth transition, having at least the previous key frame allows the player to easily continue playback. Now let’s look at the two different ways we can control this:

- getBackBufferDurationUs(): Returns the duration of samples that should be retained from the current playback position (or previous key frame, depending on retainBackBufferFromKeyFrame). This should be a constant value, as dynamic values are not currently supported.

- retainBackBufferFromKeyFrame(): Returns whether or not media should be retained from the current playback position, or from the previous key frame.
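To illustrate how these two values interact, here is a simplified sketch of trimming a single track’s queue. The `BackBufferSketch` class and its `Sample` type are hypothetical, and the trimming rule is my own approximation of the behaviour described above rather than ExoPlayer’s actual implementation.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of back-buffer trimming for one track.
final class BackBufferSketch {
    static final class Sample {
        final long timeUs;
        final boolean keyFrame;

        Sample(long timeUs, boolean keyFrame) {
            this.timeUs = timeUs;
            this.keyFrame = keyFrame;
        }
    }

    // Returns the samples that stay in memory. Samples older than
    // (positionUs - backBufferUs) are discarded; when retainFromKeyFrame is set,
    // the cut is moved back to the nearest key frame at or before that point.
    static List<Sample> trim(List<Sample> queue, long positionUs, long backBufferUs,
                             boolean retainFromKeyFrame) {
        if (queue.isEmpty()) {
            return new ArrayList<>();
        }
        long discardToUs = positionUs - backBufferUs;
        int firstKept = 0;
        while (firstKept < queue.size() && queue.get(firstKept).timeUs < discardToUs) {
            firstKept++;
        }
        if (retainFromKeyFrame) {
            int i = Math.min(firstKept, queue.size() - 1);
            while (i > 0 && !queue.get(i).keyFrame) {
                i--; // step back until we land on a key frame (or the queue start)
            }
            firstKept = i;
        }
        return new ArrayList<>(queue.subList(firstKept, queue.size()));
    }

    public static void main(String[] args) {
        // Ten one-second samples, with a key frame every four seconds (0 s, 4 s, 8 s).
        List<Sample> queue = new ArrayList<>();
        for (int i = 0; i < 10; i++) {
            queue.add(new Sample(i * 1_000_000L, i % 4 == 0));
        }
        // Playback at 9 s with a 3 s back buffer: the cut point is 6 s.
        System.out.println(trim(queue, 9_000_000L, 3_000_000L, false).get(0).timeUs); // prints 6000000
        System.out.println(trim(queue, 9_000_000L, 3_000_000L, true).get(0).timeUs);  // prints 4000000
    }
}
```

Retaining from the key frame costs a little more memory (two extra samples here), but it means the retained data can always be decoded from its first sample.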

Track Starvation vs Seeking

One interesting difference that I’ve come across in the past, when comparing ExoPlayer’s behaviour to some other playback engines, e.g. FFmpeg, is that it optimises the extraction of packets for IO. ExoPlayer will sequentially read the samples from the file, and just pass them on to the relevant track’s sample queue. One potential problem with this is that if the source media is badly muxed, it’s possible that we starve tracks. If one particular track has lots of sequential packets, it will use up a large percentage of our allocation, leaving less space for the others. The alternative, implemented by FFmpeg, is to have the extractor seek around the data source more to ensure a balance across all tracks. While this handles poorly muxed data, it does come with a potentially large overhead of IO operations as it seeks around. In practice, players like mpv will often implement a ring buffer in front of the IO so that the data source can read sequentially while the extractor seeks around an in-memory buffer. This is great for scenarios where you have lots of available memory, but on some constrained devices (like Android), it can be problematic. At the end of the day, the best approach depends on your specific scenario and how much control you have over the original media.
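The starvation effect is easy to reproduce in a toy model. In the sketch below, the `StarvationSketch` class, the single-character track ids and the one-unit sample size are all hypothetical simplifications: the file is read strictly sequentially until a fixed budget is used up, and we then look at how that budget was shared between tracks.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical model of sequential extraction into per-track queues.
final class StarvationSketch {

    // Reads samples in file order until the budget is exhausted and returns how
    // many samples each track has queued. Every sample costs one unit of budget.
    static Map<Character, Integer> fill(String fileOrder, int budget) {
        Map<Character, Integer> queued = new LinkedHashMap<>();
        for (int i = 0; i < fileOrder.length() && i < budget; i++) {
            queued.merge(fileOrder.charAt(i), 1, Integer::sum);
        }
        return queued;
    }

    public static void main(String[] args) {
        System.out.println(fill("VAVAVAVAVA", 6)); // prints {V=3, A=3}
        System.out.println(fill("VVVVVVVVAA", 6)); // prints {V=6}
    }
}
```

With the badly muxed layout the whole budget is spent on video before a single audio sample is reached, so the audio track has nothing to play.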

If you have any questions or requests for follow-up posts, please feel free to ping me via Twitter @IanDBird.